HTTP Request and Postgres integration

Save yourself the work of writing custom integrations for HTTP Request and Postgres and use n8n instead. Build adaptable and scalable Development, Core Nodes, workflows that work with your technology stack. All within a building experience you will love.

How to connect HTTP Request and Postgres

Create a new workflow and add the first step

In n8n, click the "Add workflow" button in the Workflows tab to create a new workflow. Add the starting point – a trigger on when your workflow should run: an app event, a schedule, a webhook call, another workflow, an AI chat, or a manual trigger. Sometimes, the HTTP Request node might already serve as your starting point.

Popular ways to use the HTTP Request and Postgres integration

Generate Instagram content from top trends with AI image generation

AI agent for realtime insights on meetings

WordPress - AI chatbot to enhance user experience - with Supabase and OpenAI

🤖 Advanced Slackbot with n8n

Youtube outlier detector (find trending content based on your competitors)

Scrape Google Maps by area & generate outreach messages for lead generation

Build your own HTTP Request and Postgres integration

Postgres supported actions

Delete

Delete an entire table or rows in a table

Execute Query

Execute an SQL query

Insert

Insert rows in a table

Insert or Update

Insert or update rows in a table

Select

Select rows from a table

Update

Update rows in a table

HTTP Request and Postgres integration details

HTTP Request and Postgres integration tutorials

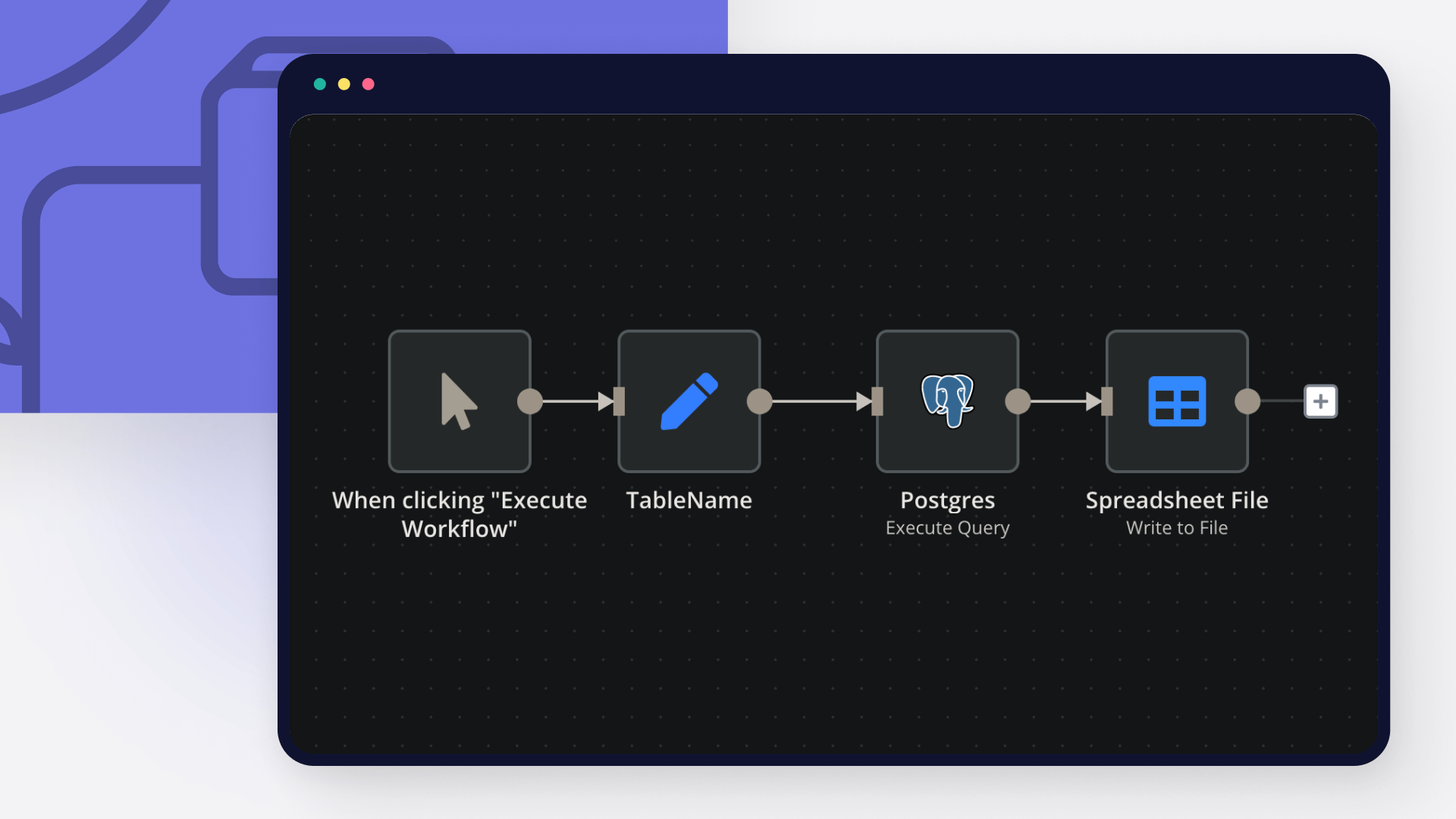

How to export data from PostgreSQL to CSV

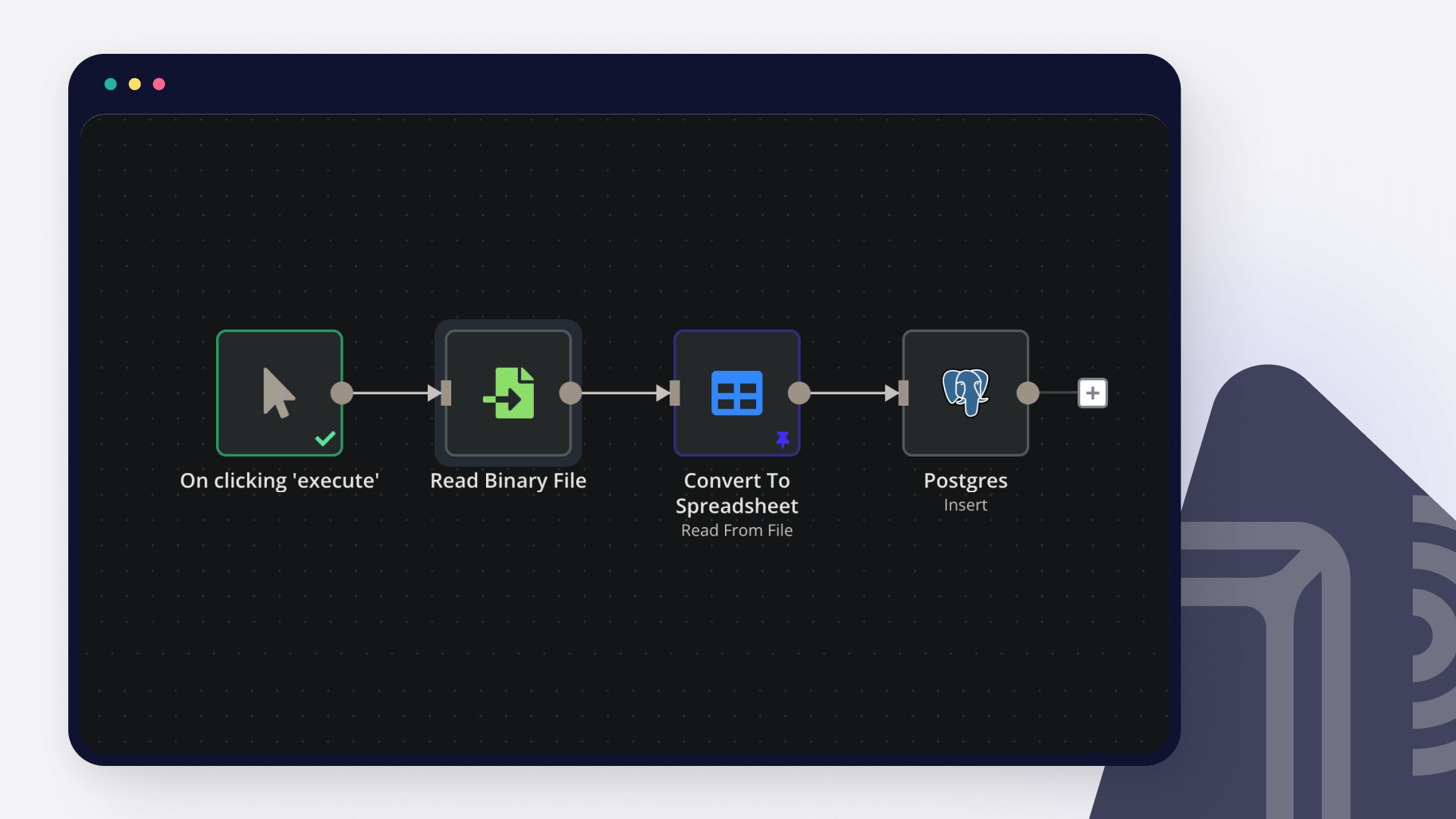

How to import CSV into PostgreSQL

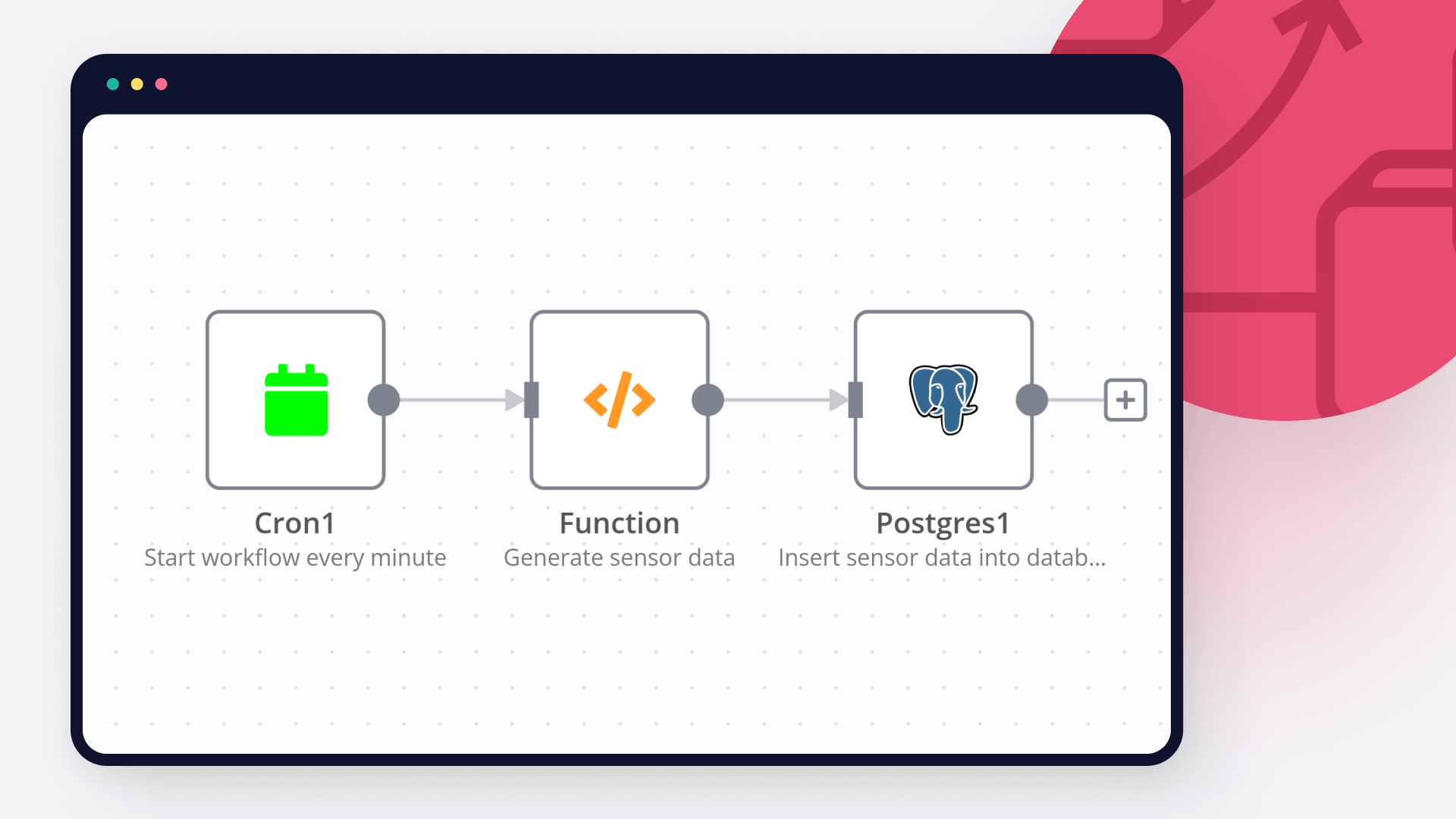

Database activity monitoring: How to automatically monitor and set alerts for a database

Automate your data processing pipeline in 9 steps

FAQ

Can HTTP Request connect with Postgres?

Can I use HTTP Request’s API with n8n?

Can I use Postgres’s API with n8n?

Is n8n secure for integrating HTTP Request and Postgres?

How to get started with HTTP Request and Postgres integration in n8n.io?

Need help setting up your HTTP Request and Postgres integration?

Google Verification Denied

Moiz Contractor

Describe the problem/error/question Hi, I am getting a - Google hasnt verified this app error. I have Enable the API, the domain is verified on the Cloud Console, the user is added in the search console and the google do…

HTTP request, "impersonate a user" dynamic usage error

theo

Describe the problem/error/question I a http request node, I use a Google service account API credential type. I need the “Impersonate a User” field to be dynamic, pulling data from the “email” field in the previous nod…

Why is my code getting executed twice?

Jon

Describe the problem/error/question I have a simple workflow that retrieves an image from url with http node and prints the json/binary in code. I have a few logs, but I am confused why I see duplicate messages for each …

How to send a single API request with one HTTP node execution, but an array of parameters in it (like emails[all]?)?

Dan Burykin

Hi! I’m still in the beginning. Now I need to make an API call via HTTP node, and send all static parameters, but with the array of emails parameter (named it wrongly just to show what I need {{ $json.email[all] }}). Wo…

Start a Python script with external libraries - via API or Command Execution?

Tony

Hi! I have a question: I am making an app that allows a person to scrape some data via a Python library. I have a Python script that needs to be triggered after certain user actions. What is the best way to: Send a p…

The world's most popular workflow automation platform for technical teams including

Why use n8n to integrate HTTP Request with Postgres

Build complex workflows, really fast